An Introduction to Denodo for Data Virtualization

We are living in an era in which enterprise data exists in more forms than many IT departments know what to do with. This includes structured and unstructured data, emails, logs, and more, stored in many different locations. So how do we get a unified view of this far-flung data and manage it in all its disparate forms? One answer is data virtualization, an umbrella term for any approach to data management that allows data to be retrieved and manipulated irrespective of its location and format.

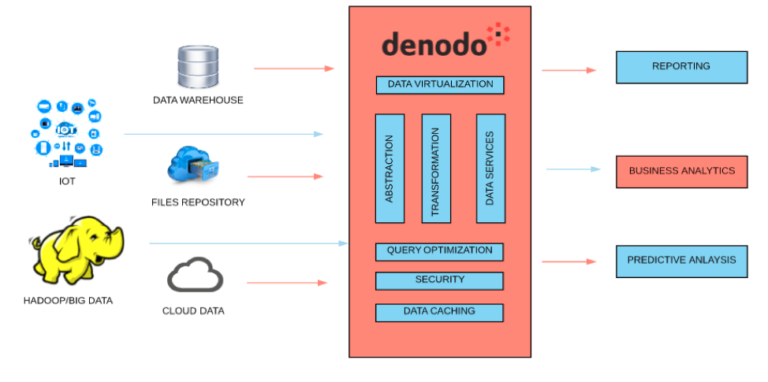

With technology evolving ever faster, businesses need up-to-date information to make real-time decisions. Organizational data is likely to take many forms, including cloud data, big data, and social media and web data, to name a few. IT teams struggle with the continual addition of new data sources through initiatives like cloud-first, app modernization, and big data analytics. They often rely on traditional integration techniques, which are resource-intensive, time-consuming, and costly. Introducing Denodo’s platform for data virtualization may help us overcome, or at least mitigate, this complexity. It provides fast access to enterprise data while avoiding many of the roadblocks that can arise when pulling data from disparate sources. Data virtualization offers a single point of access to all enterprise data without the need to move it to a central repository.

This paper will help you understand the key capabilities of data virtualization.

Why Data Virtualization?

- With data virtualization, data is neither moved nor replicated. This can make retrieving data much faster, saving users time and money.

- It is much easier for heterogeneous data to integrate and interact through Data Virtualization because the data remains in its original location. It also bridges the semantic difference between structured and unstructured data.

- Overall data quality improves and data management becomes easier.

- It enables more agile business intelligence (BI).

Data Virtualization Tools

There are multiple data virtualization platforms available, including those listed below. In this paper, we will use Denodo as the example to illustrate the characteristics and benefits of data virtualization.

- Data Current

- Denodo

- Red Hat JBoss Data Virtualization

- TIBCO Data Virtualization

- Oracle Data Service Integrator

Denodo’s Data Virtualization Tool

The Denodo Platform offers the benefits of data virtualization, including real-time access to integrated data across an organization’s diverse data sources without replicating any data. It also offers advanced features such as a dynamic query optimizer, advanced caching, and self-service data discovery.
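To make the caching idea concrete, here is a minimal Python sketch of a view whose rows are served from memory until they expire. It is not Denodo’s cache engine or API; the `CachedView` class, its `fetch` callback, and the `ttl_seconds` parameter are hypothetical names chosen for illustration.

```python
import time


class CachedView:
    """Hypothetical wrapper that serves a view's rows from memory until they expire."""

    def __init__(self, fetch, ttl_seconds, clock=time.monotonic):
        self._fetch = fetch        # callable that queries the underlying source
        self._ttl = ttl_seconds    # how long cached rows stay valid
        self._clock = clock        # injectable so the expiry logic can be tested
        self._rows = None
        self._loaded_at = None

    def rows(self):
        now = self._clock()
        expired = self._loaded_at is None or now - self._loaded_at >= self._ttl
        if expired:
            # Only here do we go back to the source system.
            self._rows = list(self._fetch())
            self._loaded_at = now
        return self._rows


def slow_source():
    # Stand-in for an expensive query against a remote source (assumption).
    return [{"region": "north", "revenue": 1200}]


view = CachedView(slow_source, ttl_seconds=300)
first = view.rows()    # queries the source
second = view.rows()   # served from the cache, no second source query
```

The trade-off in a sketch like this is freshness against load: a longer time-to-live spares the source system but lets consumers see older rows.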

How It Works

Configuring Multiple Data Sources to Denodo’s Virtualization Platform

At HEXstream, we connect multiple data sources to Denodo’s Data Virtualization Platform, a few of which are mentioned below.

- Spark SQL and Hive

- Oracle

- NoSQL databases (MongoDB and HBase)

- XML files

- Azure Blob Storage

Denodo’s flexibility in connecting to a wide variety of sources allows organizations to gain a more complete view of their enterprise data, paving the way for stronger analytics capabilities. In a future paper, we will illustrate how to connect different sources using Denodo’s Data Virtualization Tool and how to perform ETL and data analytics.
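In the meantime, the general idea of a single access point over heterogeneous sources can be sketched in plain Python. This is not Denodo’s API: an in-memory SQLite database stands in for a relational source such as Oracle, a JSON string stands in for a document store, and every name below (`customer_overview`, the sample tables and fields) is a hypothetical chosen for illustration.

```python
import json
import sqlite3
import xml.etree.ElementTree as ET


def make_relational_source():
    # Assumption: in-memory SQLite stands in for a relational database.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)", [(1, "Acme"), (2, "Globex")]
    )
    conn.commit()
    return conn


# Assumption: sample XML file content.
ORDERS_XML = """<orders>
  <order customer_id="1" amount="250.0"/>
  <order customer_id="2" amount="99.5"/>
  <order customer_id="1" amount="40.0"/>
</orders>"""

# Assumption: JSON documents standing in for a NoSQL document store.
TICKETS_JSON = '[{"customer_id": 2, "open_tickets": 3}]'


def read_customers(conn):
    for cid, name in conn.execute("SELECT id, name FROM customers ORDER BY id"):
        yield {"customer_id": cid, "name": name}


def read_orders(xml_text):
    for node in ET.fromstring(xml_text).iter("order"):
        yield {
            "customer_id": int(node.get("customer_id")),
            "amount": float(node.get("amount")),
        }


def read_tickets(json_text):
    for doc in json.loads(json_text):
        yield {"customer_id": doc["customer_id"], "open_tickets": doc["open_tickets"]}


def customer_overview(conn, xml_text, json_text):
    """Virtual view: combines the sources at query time and persists nothing."""
    totals = {}
    for order in read_orders(xml_text):
        cid = order["customer_id"]
        totals[cid] = totals.get(cid, 0.0) + order["amount"]
    tickets = {t["customer_id"]: t["open_tickets"] for t in read_tickets(json_text)}
    return [
        {
            "customer_id": c["customer_id"],
            "name": c["name"],
            "order_total": totals.get(c["customer_id"], 0.0),
            "open_tickets": tickets.get(c["customer_id"], 0),
        }
        for c in read_customers(conn)
    ]


conn = make_relational_source()
for row in customer_overview(conn, ORDERS_XML, TICKETS_JSON):
    print(row)
```

Each reader translates one source format into a common row shape, and the view joins those rows only when it is queried. The data stays where it lives, which is the property this paper attributes to data virtualization.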

Conclusion

Because data is neither moved nor replicated, data virtualization is a reliable method for data integration that can make data retrieval much faster and save users time and money. Data virtualization tools also let users mask sensitive data, which makes the approach a sound choice for analyzing such data. Organizations stand to benefit from incorporating data virtualization into their existing data operations to bridge the gaps between disparate data types and sources.

